- Midjourney prompts are becoming more complex, and rendering them demands more computational power.

- Current compute infrastructures are facing bottlenecks due to increased demand from AI applications.

- Custom silicon architectures, such as Google’s TPUs and AWS’s Trainium and Inferentia chips, aim to address these bottlenecks.

- Optimized silicon could significantly improve efficiency and performance for people working with Midjourney prompts.

- Interest in dedicated AI accelerators is rising as enthusiasm for AI-driven creativity grows.

“In the AI era, proprietary data is your only moat. Everything else is a commodity.”

What Is the Core Trend Driving the Compute Revolution Facing Midjourney Prompts?

In the sprawling corridors of Silicon Valley, a hushed excitement surrounds Midjourney’s work in AI-driven image generation. The compute revolution now facing prompt-driven image generation isn’t just another technological advance; it marks a fundamental shift in how we understand and use AI-driven creativity. The essence? Transforming natural-language prompts into highly detailed images, pushing past the traditional boundaries of artistic work and opening doors to a wide range of commercial applications.

But there’s more to this than meets the eye. As demand swells, so does the need for computational resources. Data-processing requirements are reaching unprecedented levels, creating serious compute bottlenecks. In response, firms are turning to custom silicon architectures to meet these demands. Such hardware customization is not just a technical necessity; it is becoming a strategic advantage in the tech landscape.

In its 2018 “AI and Compute” analysis, OpenAI found that the compute used in the largest AI training runs had grown more than 300,000-fold since 2012, doubling roughly every 3.4 months.
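The growth factor and the doubling time can be checked against each other with a little arithmetic. The sketch below uses only the standard library; `months_for_growth` and `growth_over` are illustrative helper names, not part of any OpenAI tool. At a 3.4-month doubling time, a 300,000-fold increase takes about 62 months (roughly five years), while a full decade at that pace would compound to tens of billions.

```python
import math


def months_for_growth(growth_factor: float, doubling_months: float) -> float:
    """Months needed to grow by `growth_factor` at a fixed doubling time."""
    # The number of doublings is log2 of the growth factor.
    return math.log2(growth_factor) * doubling_months


def growth_over(months: float, doubling_months: float) -> float:
    """Growth factor reached after `months` at a fixed doubling time."""
    return 2 ** (months / doubling_months)


if __name__ == "__main__":
    needed = months_for_growth(300_000, 3.4)
    print(f"300,000x at a 3.4-month doubling time: {needed:.1f} months ({needed / 12:.1f} years)")
    print(f"Growth over 120 months at the same pace: {growth_over(120, 3.4):.2e}x")
```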

How Does It Work in Real-World Applications?

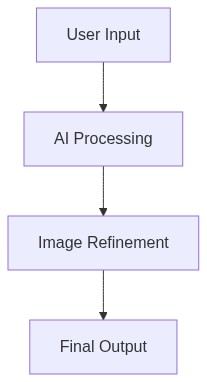

The journey from prompt to pixel isn’t as simple as it seems. Midjourney uses neural networks to translate descriptive text into visually coherent, contextually relevant images. The real work happens inside those networks: how they encode the prompt, transform that representation, and decode it into an image.
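Midjourney has not published how its encoder works, so the following is only a toy illustration of the “encode” step: turning a prompt into a fixed-length vector that later stages can condition on. It assumes whitespace tokenization and substitutes hashed pseudo-embeddings for a learned embedding table; public text-to-image systems use trained transformer encoders instead (Stable Diffusion, for example, uses a CLIP text encoder). All function names are hypothetical.

```python
import hashlib
import math


def token_vector(token: str, dim: int) -> list[float]:
    """Deterministic pseudo-embedding for one token (hypothetical stand-in
    for a learned embedding table): the first `dim` bytes of a SHA-256
    digest, mapped into [-1, 1]."""
    if not 1 <= dim <= 32:
        raise ValueError("dim must be between 1 and 32 (SHA-256 yields 32 bytes)")
    digest = hashlib.sha256(token.encode("utf-8")).digest()
    return [b / 127.5 - 1.0 for b in digest[:dim]]


def encode_prompt(prompt: str, dim: int = 8) -> list[float]:
    """Encode a prompt as a unit-length vector by mean-pooling token vectors.
    An empty prompt returns the zero vector."""
    if not 1 <= dim <= 32:
        raise ValueError("dim must be between 1 and 32")
    tokens = prompt.lower().split()
    if not tokens:
        return [0.0] * dim
    vectors = [token_vector(t, dim) for t in tokens]
    # Mean-pool across tokens: one averaged value per embedding dimension.
    pooled = [sum(column) / len(vectors) for column in zip(*vectors)]
    # L2-normalize so prompts of different lengths are comparable.
    norm = math.sqrt(sum(x * x for x in pooled)) or 1.0
    return [x / norm for x in pooled]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Dot product, which equals cosine similarity for unit vectors."""
    return sum(x * y for x, y in zip(a, b))


if __name__ == "__main__":
    fox = encode_prompt("a red fox in the snow")
    shuffled = encode_prompt("snow the in fox red a")
    city = encode_prompt("neon city skyline at night")
    print(round(cosine_similarity(fox, shuffled), 6))  # ~1.0: word order is ignored
    print(round(cosine_similarity(fox, city), 6))
```

Mean pooling throws away word order, so “fox chasing rabbit” and “rabbit chasing fox” collapse to the same vector. That limitation is one reason production systems rely on far larger, compute-hungry transformer encoders.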

The heavy computational lifting is typically handled by Graphics Processing Units (GPUs) or, increasingly, specialized accelerators such as Google’s Tensor Processing Units (TPUs). Challenges arise when scaling these workloads to meet global demand. That’s where custom silicon architectures come in: they are designed around specific workloads, such as the large matrix multiplications at the heart of neural networks, and can complete them in a fraction of the time general-purpose processors need.
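How much custom silicon can cut end-to-end processing time depends on how much of the pipeline it actually accelerates. Amdahl’s law captures this: if a fraction p of the runtime is sped up by a factor s, the overall speedup is 1 / ((1 − p) + p / s). The sketch below applies the formula; the 90% accelerated fraction is an assumed, illustrative value, not a measured figure for Midjourney or any specific chip.

```python
def amdahl_speedup(accelerated_fraction: float, kernel_speedup: float) -> float:
    """Overall speedup when only part of a workload is accelerated (Amdahl's law).

    accelerated_fraction: share of the original runtime the accelerator handles (0..1).
    kernel_speedup: how many times faster that share runs on the accelerator.
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be in [0, 1]")
    if kernel_speedup <= 0:
        raise ValueError("kernel_speedup must be positive")
    # Time left = untouched part + accelerated part shrunk by kernel_speedup.
    remaining = (1.0 - accelerated_fraction) + accelerated_fraction / kernel_speedup
    return 1.0 / remaining


if __name__ == "__main__":
    # Assumed: 90% of the pipeline runs on the accelerator; 10% stays on the host.
    for s in (2, 10, 100):
        print(f"kernel {s:>3}x faster -> end-to-end {amdahl_speedup(0.9, s):.2f}x")
```

With 90% of the work accelerated, even a 100x faster chip yields only about 9.2x end to end, because the untouched 10% caps the gain at 10x. This is why accelerator gains are usually paired with work on data loading, pre-processing, and other host-side steps.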

The Tool Stack Revolutionizing AI Image Generation

- NVIDIA DGX-1: An AI supercomputer built around eight NVIDIA data-center GPUs, used to train image recognition and generation models on large datasets.

- Graphcore IPU: Graphcore’s Intelligence Processing Units are processors designed specifically for AI workloads, aimed at handling compute-intensive tasks with better speed and efficiency.

- TensorFlow: An open-source machine learning platform known for its versatility in handling complex computations and widely used by developers on AI-focused projects.

- PyTorch: Known for its simplicity and flexibility, PyTorch is favored by many AI researchers for intuitive APIs that streamline prototyping and experimentation with neural networks.

“Optimizing AI workloads through custom silicon can lead to a 5–10x efficiency improvement, making it central to harnessing AI’s potential.” – Microsoft

What Steps Should Individuals Take?

Step 1: Commit to learning specialized AI development tools. Mastering frameworks such as TensorFlow or PyTorch will sharpen your competitive edge.

Step 2: Embrace community and collaboration. Platforms like GitHub offer a wealth of shared knowledge and code that can speed up your learning considerably.

How Can Businesses Leverage This Revolution?

Step 1: Evaluate your current compute infrastructure. Moving suitable workloads to custom silicon may offer substantial gains in efficiency and cost-effectiveness.

Step 2: Invest in AI capabilities by partnering with firms that specialize in AI training and deployment. A strategic partnership can improve product innovation and market positioning.

Step 3: Focus on scalability. Make sure your technology stack can absorb future growth in demand without degrading service quality.
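Evaluating infrastructure and planning for scale both come down to arithmetic you can run on your own measurements. The sketch below compares two hypothetical options on cost per 1,000 images and estimates how many months of headroom a given capacity leaves under steady demand growth. Every number in it (hourly prices, throughputs, the 10% monthly growth rate, the 80% utilization target) is a placeholder assumption, not a benchmark of any real GPU or custom chip.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeOption:
    name: str
    hourly_cost_usd: float  # all-in cost per accelerator-hour (placeholder)
    images_per_hour: float  # measured throughput on your workload (placeholder)

    def cost_per_1k_images(self) -> float:
        return self.hourly_cost_usd / self.images_per_hour * 1000


def months_of_headroom(
    capacity_per_hour: float,
    peak_demand_per_hour: float,
    monthly_growth: float,
    target_utilization: float = 0.8,
) -> float:
    """Months until peak demand exceeds the usable share of capacity.

    Assumes demand compounds at a constant monthly rate. target_utilization
    reserves spare capacity for traffic spikes instead of planning for 100%.
    """
    usable = capacity_per_hour * target_utilization
    if peak_demand_per_hour >= usable:
        return 0.0
    if monthly_growth <= 0:
        return math.inf
    # Solve peak * (1 + g)^m = usable for m.
    return math.log(usable / peak_demand_per_hour) / math.log(1 + monthly_growth)


if __name__ == "__main__":
    gpu = ComputeOption("general-purpose GPU (hypothetical)", 4.00, 1_200)
    custom = ComputeOption("custom accelerator (hypothetical)", 6.00, 3_000)
    for option in (gpu, custom):
        print(f"{option.name}: ${option.cost_per_1k_images():.2f} per 1,000 images")
    # Placeholder: 10,000 images/hour capacity, 6,000 at peak today, 10%/month growth.
    print(f"headroom: {months_of_headroom(10_000, 6_000, 0.10):.1f} months")
```

Under these placeholder numbers the pricier option is still cheaper per image because of its higher throughput, and the fleet runs out of usable capacity in about three months. Comparing cost per unit of output rather than hourly price is the design choice that matters here.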

| Aspect | The Old Way (Manual) | The New Way (AI/Tech) |
|---|---|---|
| Time Required for Conceptualization | 30-40 hours per project | 2-3 hours (using AI-based tools) |
| Time Required for Revisions | 10-15 hours per revision cycle | 30 minutes – 1 hour (instant adjustments) |
| Cost of Implementation | $5,000 – $10,000 per project (manual labor) | $500 – $1,000 per project (subscription/software fees) |
| Number of Team Members Required | 5-10 professionals (designers, consultants) | 1-2 professionals (AI operators) |
| Speed of Prototype to Market | 3-6 months | 1-3 weeks |
| Flexibility and Adaptation | Limited (manual constraints) | Highly flexible (real-time data and models) |
| Overall Efficiency | Moderate (dependence on human input) | High (AI optimizes processes) |
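Because the table gives ranges, the savings it implies are ranges too. The helper below (a hypothetical name, not from any library) pairs the old low end with the new high end for the most conservative reduction, and the old high end with the new low end for the most optimistic one. It only restates the table’s own figures; it does not validate them.

```python
def reduction_range(
    old: tuple[float, float], new: tuple[float, float]
) -> tuple[float, float]:
    """Return (conservative, optimistic) fractional reductions from old to new ranges."""
    old_low, old_high = old
    new_low, new_high = new
    conservative = 1 - new_high / old_low  # worst case: new high vs. old low
    optimistic = 1 - new_low / old_high    # best case: new low vs. old high
    return conservative, optimistic


# Rows from the table whose two columns share a unit.
ROWS = {
    "Conceptualization (hours)": ((30, 40), (2, 3)),
    "Revisions (hours per cycle)": ((10, 15), (0.5, 1)),
    "Cost (USD per project)": ((5_000, 10_000), (500, 1_000)),
    "Team size (people)": ((5, 10), (1, 2)),
}

if __name__ == "__main__":
    for label, (old, new) in ROWS.items():
        low, high = reduction_range(old, new)
        print(f"{label}: {low:.0%} to {high:.0%} reduction")
```

The speed-to-market row is left out because its columns use different units (months versus weeks). Converting it would require assuming a fixed number of weeks per month.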