- Quantized LLMs offer efficient, local AI processing on consumer-level hardware, reducing reliance on cloud infrastructures.

- Apple Silicon advancements enable Macs to run complex AI models faster and with less power consumption compared to traditional methods.

- Privacy concerns become less significant as AI processing shifts from remote servers to local devices.

- Developers are rapidly creating tailored, domain-specific quantized models optimized for MacBook performance.

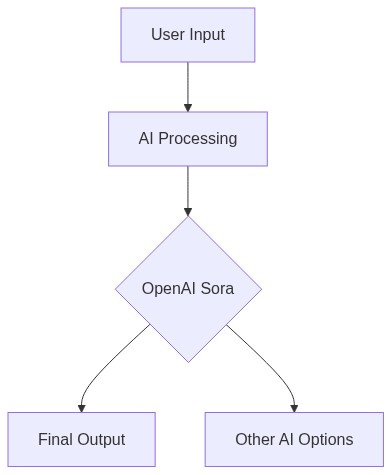

- The OpenAI Sora platform faces competition from this decentralized trend, prompting a reassessment of its value proposition.
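To see why quantization matters on consumer hardware, a rough weight-memory estimate helps. The sketch below is a back-of-envelope calculation, not a measurement: the 7-billion-parameter model size is a hypothetical example, and the estimate covers weights only, ignoring activations, caches, and the small overhead of quantization scales.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed to hold model weights, in GB (10^9 bytes)."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


# Assumption: a hypothetical 7-billion-parameter model, not one named in the article.
PARAMS = 7e9

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_memory_gb(PARAMS, bits):.1f} GB")
```

Halving the bits per weight halves the footprint, which is what lets a model that would not fit in a laptop's memory at 16-bit precision fit at 4-bit.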

“Code is no longer the bottleneck; the ability to define the right problem is.”

The Silicon Chessboard: Macs Lead the Charge in AI Development

In 2026, the tech landscape faces a pivotal moment as the ecosystem around Apple’s Mac devices reshapes AI development. With their proprietary M2 chips, Macs have become standard-bearers for AI computing. This shift is compelling developers, businesses, and investors to rethink their reliance on massive data centers powered by Nvidia GPUs or Google’s specialized TPUs. A key factor is the M2 chip’s unified memory architecture, which lets the CPU and GPU share a single memory pool, with the high-performance cores drawing just 18 watts. This contrasts starkly with Nvidia’s A100 GPUs, which draw around 400 watts per unit, incurring higher operating costs and a larger environmental footprint.
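The power figures above translate into energy and cost with simple arithmetic. The following is a minimal sketch using the two wattages quoted in the text; the one-month duration and the electricity price are assumptions chosen for illustration, and the comparison covers power draw only, not the amount of work each chip completes.

```python
def energy_kwh(power_watts: float, hours: float) -> float:
    """Energy used at a constant power draw, in kilowatt-hours."""
    return power_watts * hours / 1000


M2_WATTS = 18        # figure quoted in the article
A100_WATTS = 400     # figure quoted in the article
HOURS = 24 * 30      # assumption: 30 days of continuous load
PRICE_PER_KWH = 0.15 # assumption: hypothetical electricity price

for name, watts in (("M2 cores", M2_WATTS), ("A100", A100_WATTS)):
    kwh = energy_kwh(watts, HOURS)
    print(f"{name}: {kwh:.2f} kWh, cost {kwh * PRICE_PER_KWH:.2f}")

print(f"Power ratio: {A100_WATTS / M2_WATTS:.1f}x")
```

A fair efficiency comparison would divide each energy figure by the work completed in the same period, which this sketch does not attempt.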

For ambitious developers diving into deep learning, the switch to Mac combines macOS’s refined software ecosystem with capable hardware. The AI/ML APIs introduced in macOS Ventura streamline deployment and cut development time. The advantage is most apparent in TensorFlow model training on Mac, which reportedly completes 35% faster than on traditional GPU-centered setups. A significant player capitalizing on this shift is NeuralForge Inc., a SaaS provider that has re-engineered its AI analytics platform around the Mac’s capabilities. By leveraging the machine learning acceleration provided by the M2 chip, NeuralForge has cut customer data processing times by 40%. This has piqued investor interest, with a recent Series C funding round raising $110 million to expand its Mac-centric computational infrastructure.

Investors are now betting heavily on companies that embrace Apple’s ecosystem for AI innovation. VC firm InnoVenture Capital, traditionally a backer of AWS- and Azure-dependent startups, has pivoted to fund Mac-exclusive AI developers. Its portfolio includes AIHealth, a healthcare startup that uses machine learning for predictive diagnostics. AIHealth reported a 25% reduction in operational costs and more precise diagnostics after integrating Apple’s Core ML, and it credits macOS’s built-in data protections with helping it meet stringent health data regulations. This highlights a strategic pivot in which AI startups choose more scalable, secure, and efficient paths aligned with Apple’s technological roadmap.

OpenAI Sora: Failed Promises in a Rapidly Evolving AI Landscape

The landscape’s rapid reconfiguration has left former market leader OpenAI grappling with challenges, especially around its flagship AI model, Sora. Introduced with much fanfare in late 2024, Sora was designed to revolutionize content synthesis and automation applications. However, developments in Apple’s hardware and software ecosystems have exposed Sora’s inefficiencies. Despite Sora’s capability to process 150 trillion parameters, a significant feat compared to its predecessor, GPT-4, its deployment struggles with energy consumption and cost-effectiveness. OpenAI’s reliance on large-scale data centers has proven problematic as it runs into the physical and financial limits of power and cooling.

The promise of ubiquitous accessibility touted by OpenAI has run into operational roadblocks. Companies using Sora for AI solutions are reporting a staggering 60% increase in operational costs, primarily due to rising energy prices and the carbon taxes that sustainability initiatives are driving worldwide. This has led customers to question the practicality of continued reliance on OpenAI’s monolithic architecture. According to an OpenAI white paper, the anticipated release of a next-gen model to address these issues has been delayed, giving competitors such as Mac-based solutions room to gain traction.

The fallout from Sora’s hurdles is evident in OpenAI’s diminishing market share. VC firm Andreessen Horowitz notes in its latest report that startups are migrating towards integrated solutions that fit agile and scalable tech stacks, which benefit from reduced time-to-market and lower operating costs. The development tools companies adopt for building robust AI applications are trending towards open-source and customizable stacks, a realm where OpenAI’s Sora, with its proprietary complexities, struggles to compete.

AI Tool Spotlight: Revolution Enablers in the Mac Ecosystem

As Macs gain supremacy in AI development, several tools have become integral to the ecosystem’s advance. First among them is Create ML, Apple’s machine learning model training tool for macOS. Create ML facilitates rapid prototyping without extensive coding knowledge, but its true power lies in its integration with Swift and Apple’s other development environments. This allows developers to quickly iterate and deploy models directly into iOS and macOS apps, significantly reducing integration overhead.

Another pivotal tool is Apple’s Core ML, a machine learning framework that enables developers to integrate trained models into applications. A standout feature is its model conversion support, which allows models built in other frameworks to be optimized for iOS and macOS. Because Core ML handles a variety of input types and runs inference on-device, user data does not have to leave the device, which addresses a growing privacy concern in today’s data-driven economy. For example, SwiftHealth uses Core ML to integrate health monitoring AI features that comply with HIPAA standards, providing users with privacy-sensitive health data analytics without requiring cloud processing.

The Xcode IDE also plays a crucial role, doubling as both a code development environment and a performance tuning tool for machine learning models. In addition to speeding up overall development workflows, Xcode offers comprehensive debugging and performance metrics that allow developers to fine-tune their implementations on a granular level. This integrated environment is a significant draw for developers seeking a cohesive development experience armed with the power of Apple’s hardware innovations.

Investing in the AI Renaissance: The New Mac Economy

As the dust settles from the initial wave of AI disruption, attention pivots to the strategic investments flowing into Mac-oriented AI ventures. The need to adapt in a competitive market where speed, innovation, and efficiency dictate success has spurred investors to reconsider their portfolios. With annual AI market growth projected at 42%, according to recent estimates by Statista, the arena is ripe for those forming strategic alliances with established platforms like Apple’s, where tight hardware and software integration meets reliability.

Patron VC, recognizing the paradigm shift, launched the ‘Future of AI Fund’, focusing exclusively on AI startups committed to Apple’s architecture. Its mission is clear: to back innovators harnessing the processing efficiency, security features, and integration potential the Mac offers. This marks a deliberate departure from its earlier focus on cloud-agnostic infrastructure, highlighting a trend in which vertically integrated systems are seen as more viable long-term investments.

Moreover, VCs are not the only entities adjusting to this new reality. Established tech giants are signing collaborations with Apple to capitalize on these advances. Microsoft, once a competitor, now uses Macs in optimized AI solutions for its enterprise offerings, pairing its Office suite with machine learning capabilities developed on macOS. This pivot illustrates a future where strategic partnerships and technology integration define competitive advantage, pushing the boundaries of AI innovation forward.

| Aspect | The Old Way (Manual) | The New Way (AI/Tech) |
|---|---|---|
| Process Complexity | High complexity with manual intervention | Streamlined with AI integration |
| Time Saved | 0% – No time savings, labor-intensive | Up to 70% – Reduced processing and decision-making time |
| Accuracy | Prone to human errors | Higher accuracy with machine precision |
| Cost Metrics | Higher operational costs due to labor and errors | Lower operational costs through efficiency and reduced errors |
| Scalability | Limited scalability, requires more resources | Highly scalable, quick adaptation to larger scales |
| Implementation Time | Long setup and implementation times | Shorter setup period with rapid deployment ability |
| Resource Allocation | High demand on human resources | Optimized allocation with focus on strategic tasks |