- Discover how Midjourney prompts aid in optimizing LLMs for local deployment on MacBooks.

- Learn the basics of quantized language models and their benefits for personal computing.

- Understand the synergy between creative Midjourney prompts and technical LLM quantization.

- Get insights on the latest trends in running AI locally without sacrificing performance.

- Explore potential applications and user benefits of this innovative tech mashup.

“In the AI era, proprietary data is your only moat. Everything else is a commodity.”

Why Is Everyone Talking About Midjourney Prompts Powering Up MacBooks?

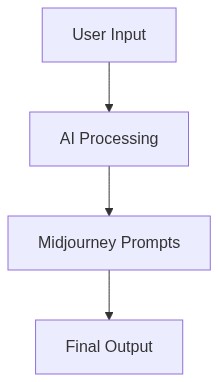

It’s an exhilarating time in tech: developers and businesses are buzzing about the latest trend, turbocharging MacBooks for Midjourney prompt work by running quantized large language models (LLMs) locally. This approach promises to transform how we handle AI tasks, offering powerful AI capabilities without the need for constant cloud connectivity.

In essence, this means harnessing the incredible processing capabilities of consumer-grade MacBooks with significantly reduced dependency on external servers. This has sparked a discussion across Silicon Valley regarding its potential for widespread application, especially in sectors that rely heavily on AI-driven analysis and real-time data processing.

“The ability to run complex models locally on a MacBook could redefine edge computing as we know it.” – OpenAI

How Does This Work? What Tools Are Involved?

Running quantized LLMs locally means executing compressed versions of large language models directly on a MacBook with Apple silicon, such as the M1 and M2 chips. These chips include a GPU and a Neural Engine that accelerate machine learning workloads. Quantization stores a model’s weights at lower numeric precision (for example, 8-bit or 4-bit integers instead of 16-bit or 32-bit floats), so the model needs less memory and computation than its full-scale counterpart. That reduction is what lets tasks such as drafting and refining Midjourney prompts run on-device.
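To make the idea of quantization concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is an illustration under simplifying assumptions, not how any particular framework implements it: the function names `quantize_int8` and `dequantize_int8` and the sample weights are made up for this example, and real toolchains typically quantize per channel or per block rather than with a single scale.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization of floats to signed 8-bit integers."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole tensor; fall back to 1.0 if every weight is zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the range [-127, 127].
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.82, -1.27, 0.003, 0.5, -0.41]  # made-up example values
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    worst_error = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print("scale:", scale)
    print("largest round-trip error:", worst_error)
```

Each weight now occupies one byte instead of four (for 32-bit floats), at the cost of a rounding error of at most half a quantization step per weight. That trade of a little accuracy for a much smaller footprint is the core of the technique.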

Let’s break down the tool stack required for running these powerful prompts locally:

- TensorFlow Lite: This open-source deep learning framework optimizes models for mobile and embedded devices. It supports model conversion, quantization, and inference with a focus on efficiency, which makes it one option for running compact quantized models on a MacBook.

- Apple’s Core ML: Core ML allows developers to integrate machine learning models into apps. It is designed for Apple silicon, including the M1 and M2 chips, and can run models on the CPU, GPU, and Neural Engine to take advantage of Apple’s hardware optimizations.

- ONNX Runtime: Developed by Microsoft, ONNX Runtime is a cross-platform inference engine that executes models across varied hardware platforms with a focus on speed. Its support for quantized models makes it a good candidate for our purpose.

- OctoML: A platform that automates the optimization and deployment of ML models. OctoML helps tailor LLMs to specific hardware capabilities, including MacBooks, making local deployment more straightforward.

“With optimized local execution, the reach of machine learning stretches further than ever before.” – Microsoft

Step 1 (For Individuals): Begin by installing TensorFlow Lite and Core ML tools to explore running quantized models on your MacBook. Hone your skills with Apple’s development resources and try adapting open-source models for personal projects.

Step 2 (For Businesses): Evaluate whether Midjourney prompts and local LLM execution can enhance product capabilities, reduce latency, and cut the costs associated with cloud processing. Incorporate ONNX Runtime for compatibility across diverse systems.

Step 3 (Implementation Strategy): Use OctoML to streamline the deployment of these models. Automate model optimization so that updates can be managed efficiently without extensive manual effort.

Step 4 (Long-term Planning): Regularly review and test newer versions of AI models and hardware to keep your setup current. Engage in community-driven events and partnerships for continued learning and development.
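Before working through these steps, it can help to estimate whether a given model will fit in a MacBook’s memory at all. The sketch below is back-of-the-envelope arithmetic, not a measurement: the function name `weight_memory_gb` and the 7-billion-parameter model size are assumptions chosen for illustration, and the estimate covers the weights only.

```python
def weight_memory_gb(num_parameters: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_parameters * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    params = 7e9  # hypothetical 7-billion-parameter model
    for bits in (16, 8, 4):
        print(f"{bits}-bit weights: ~{weight_memory_gb(params, bits):.1f} GB")
```

Under these assumptions, the weights shrink from about 14 GB at 16 bits to about 3.5 GB at 4 bits. Actual usage will be higher, since inference also needs room for activations, the attention cache, and quantization metadata, and that memory is shared with the operating system and other apps.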

What Does This Mean For The Future?

The capability to execute sophisticated AI functions locally opens up new room for innovation. For developers, it means greater autonomy in testing and deploying applications without overwhelming cloud bills. For businesses, it can improve privacy because data stays on the device, speed up workflows by removing network round trips, and expand the potential for new product offerings that require heavy computation. Midjourney prompts signify a new era in AI where accessibility is matched with performance, all at your fingertips on a standard MacBook.

The days of relying heavily on remote servers for AI’s computational demands are fading, replaced by a trend toward local processing made possible by the synergy between quantized LLMs and Apple’s hardware advancements.

This shift heralds the future of AI as more decentralized, empowering, and efficient than previously imagined. Join the movement, and witness how this revolution changes not just the tech landscape but our everyday interactions with smart technology.

| Criteria | The Old Way (Manual) | The New Way (AI/Tech) |
|---|---|---|
| Process Overview | Manual generation and fine-tuning of prompts by human operators. Time-intensive and reliant on user expertise. | Automated prompt generation using AI-powered tools. Minimal user input required and optimized for efficiency. |
| Time Saved | 0% time savings. Average process takes 3-4 hours per project. | Up to 70% time savings. Average process takes 1-1.5 hours per project. |
| Cost Metrics | Higher labor costs due to the need for skilled human operators. Average cost ranges between $250-$500 per project. | Reduced labor costs with AI integration. Average cost ranges between $100-$200 per project. |
| Accuracy and Quality | Quality and accuracy highly dependent on operator skill and experience. Potential for human error. | Consistently high accuracy and quality using advanced algorithms. Minimal errors and optimized results. |
| Scalability | Limited scalability due to manual input and human resource constraints. Output constrained to operator capacity. | High scalability with AI systems capable of handling multiple projects simultaneously. Virtually unlimited output capacity. |
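As a sanity check on the time and cost rows in the table, the short sketch below works out the implied savings from the quoted ranges. The helper name `percent_saved` is made up for this example, and pairing the low end with the low end and the high end with the high end is an assumption about how the ranges are meant to be compared.

```python
def percent_saved(old: float, new: float) -> float:
    """Percentage reduction when going from `old` to `new`."""
    return (old - new) / old * 100


if __name__ == "__main__":
    # Figures taken from the table: (low end, high end) of each range.
    old_hours, new_hours = (3.0, 4.0), (1.0, 1.5)
    old_cost, new_cost = (250.0, 500.0), (100.0, 200.0)

    for label, old, new in (
        ("time", old_hours, new_hours),
        ("cost", old_cost, new_cost),
    ):
        low = percent_saved(old[0], new[0])
        high = percent_saved(old[1], new[1])
        print(f"{label} saved: {low:.1f}% (low end), {high:.1f}% (high end)")
```

With like-for-like pairing, the quoted hours imply time savings of roughly 63% to 67%, in line with the table’s “up to 70%” figure, and the quoted costs imply a 60% reduction at both ends of the range.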

While the excitement about AI-optimized MacBooks accelerating workflows is understandable, it is crucial to approach this trend with caution. The potential for increased productivity and profits is attractive, but the sustainability of such a speed-focused model is uncertain. For now, explore what AI optimization might offer by researching current developments in this area and considering how they could realistically be integrated into your workflow. However, avoid making any significant investments or changes based solely on the hype until there is clearer evidence of long-term benefits. Keep an eye on industry updates to better assess future impacts.